Beyond Demos, Toward Decision Intelligence

🚀 Why This Hackathon Actually Matters

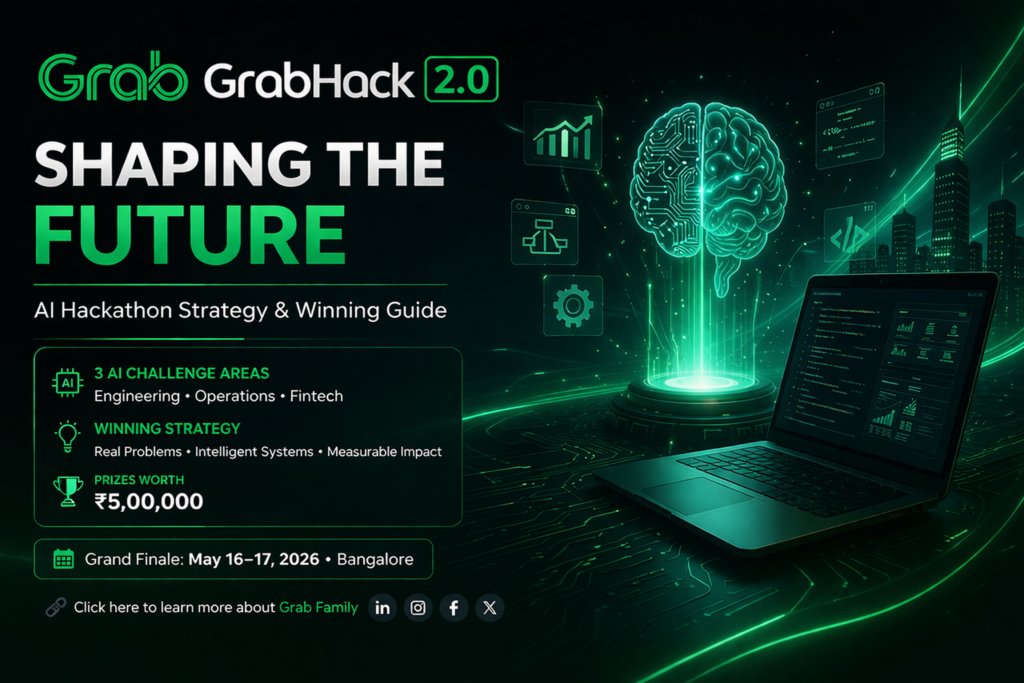

Most hackathons reward velocity over value, but GrabHack 2.0 inverts the equation. It’s not about speed alone. It’s about architecting intelligent systems that use AI to solve real business friction. The shift from quick demos to production-ready thinking is what separates forgettable entries from breakthrough solutions.

🧠 What Makes GrabHack 2.0 Different?

Unlike typical hackathons, this one focuses on three high-leverage domains:

- 🤖 AI-powered engineering productivity — tools that make developers faster and reduce toil

- 📊 Intelligent business operations — automation beyond chatbots, driving operational excellence

- 💰 Fintech solutions for real users — inclusive, secure, and impactful financial tools

⚠️ The Problem With Most Hackathon Approaches

Here’s the uncomfortable truth: most participants will fail, not because they lack skills, but because they approach the hackathon the wrong way. They build UI-heavy demos, overuse AI buzzwords, and skip real problem validation. The result is weak presentations, no clear impact, and forgettable solutions.

🚫 Mistake 1: AI as a Feature, Not a System

Real intelligence structures data flow and outputs meaningful decisions.

🚫 Mistake 2: Ignoring Real Impact

Judges love numbers: time saved, cost reduced, efficiency improved.

🚫 Mistake 3: Weak Problem Definition

Define constraints, user persona, and frequency of the pain point.

🚫 Mistake 4: Overengineering the Solution

A sharp, narrow AI solution beats an unfinished platform.

💡 A Strong AI Project Direction (Example)

🧠 AI-Powered Incident Intelligence System

Problem: Developers struggle to identify root causes during production failures, so mean time to resolution (MTTR) stays high and the business impact grows.

Solution: An AI system that analyzes logs + error traces, detects anomaly patterns, suggests probable root causes, and recommends fixes.

Impact: Faster debugging, reduced downtime, and improved developer productivity, stated as a measurable target (for example, a 40% reduction in mean time to resolution).

Projects like this win because they show clear business value and the AI does meaningful reasoning, not just classification.
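A reduction in mean time to resolution only persuades if you can show how it was measured. Here is a minimal sketch of that arithmetic, assuming you have opened and resolved timestamps for each incident. The incident durations are invented for illustration and are not results from any real system.

```python
from datetime import datetime, timedelta

def mttr(incidents):
    """Mean time to resolution over (opened, resolved) datetime pairs."""
    total = sum((resolved - opened for opened, resolved in incidents), timedelta())
    return total / len(incidents)

def percent_reduction(before, after):
    """Relative MTTR reduction as a percentage of the baseline."""
    return 100.0 * (before - after) / before

# Illustrative data only: resolution times in minutes, before and with the tool.
t0 = datetime(2024, 1, 1, 9, 0)
baseline = [(t0, t0 + timedelta(minutes=m)) for m in (60, 90, 150)]
with_tool = [(t0, t0 + timedelta(minutes=m)) for m in (40, 50, 90)]

before = mttr(baseline)
after = mttr(with_tool)
reduction = percent_reduction(before, after)
print(f"MTTR {before} -> {after} ({reduction:.0f}% reduction)")
```

Even on simulated incidents, showing the baseline, the new figure, and the formula gives judges something they can check.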

⚙️ How to Structure Your Hackathon Solution

To stand out, your submission should articulate each layer:

1. Problem Clarity – Specific user pain, frequency, current cost of inaction.

2. AI Logic – What data, which model/approach, how processing generates insights.

3. System Design – Input layer (data ingestion) → processing (AI/ML core) → output layer (actionable UI/API).

4. Business Value – Always answer: why should anyone use this? ROI, adoption, scale.

[ Telemetry / Logs ] → [ Embedding & Anomaly Detector ] → [ Root Cause Classifier ] → [ Slack Alert + Remediation Suggestion ]

🔁 Explainability layer: highlights top 3 error patterns with confidence.
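The pipeline above can be prototyped end to end before any model is involved. The sketch below is a deliberately simple stand-in: it groups error lines by normalized pattern and reports the top 3 with their share of all errors. That share is a crude proxy for confidence, not a model score. The function names, the log format, and the sample lines are all assumptions made for illustration.

```python
import re
from collections import Counter

def normalize(line):
    """Collapse variable parts (hex ids, numbers) so similar errors group together."""
    line = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", line)
    return re.sub(r"\d+", "<N>", line)

def top_error_patterns(log_lines, k=3):
    """Return the k most frequent ERROR patterns with their share of all errors."""
    errors = [normalize(line) for line in log_lines if "ERROR" in line]
    counts = Counter(errors)
    total = sum(counts.values())
    return [(pattern, count / total) for pattern, count in counts.most_common(k)]

def format_alert(patterns):
    """Output layer: a plain-text message that a chat webhook could carry."""
    rows = [f"{i}. {p} ({share:.0%} of errors)"
            for i, (p, share) in enumerate(patterns, 1)]
    return "Top error patterns:\n" + "\n".join(rows)

# Hypothetical log lines, invented for this example.
logs = [
    "ERROR db timeout after 3000 ms on shard 4",
    "INFO request 812 served",
    "ERROR db timeout after 3000 ms on shard 7",
    "ERROR payment 9912 declined: upstream 502",
    "ERROR db timeout after 2500 ms on shard 4",
]
patterns = top_error_patterns(logs)
print(format_alert(patterns))
```

You can later swap the frequency count for embeddings and a trained classifier without changing the input and output layers, and that separation is the point of the layered design.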

🧩 Think Like a System Designer, Not Just a Coder

The winning mindset is to architect for reality and scale rather than focus only on building. Solve a real, repeated problem; keep the solution scalable; and make sure AI decisions are explainable. The goal is not just to create a demo, but to build something that could realistically evolve into a production system.

🔥 The Strategic Pivot: Decision-Centric AI

🚫 Generic approach: “We used an LLM to generate summaries.”

✔ Elite approach: “Our model classifies incident severity, proposes runbook actions, and estimates blast radius using historical patterns. Reduces cognitive load by 53%.”

Remember: Judges are evaluating impact per unit complexity. A simple but accurate classifier with real business metrics outperforms an over-engineered multi-agent system with no validation.
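To make "decision-centric" concrete, the sketch below returns a structured decision (severity, runbook action, estimated blast radius) instead of free text. It assumes a small table of past incidents is available. The history records, the severity threshold, and every name in the code are hypothetical, chosen only to show the shape of the output.

```python
from dataclasses import dataclass

# Hypothetical history: (error_type, services_affected, action_that_resolved_it)
HISTORY = [
    ("db_timeout", 5, "fail over to replica"),
    ("db_timeout", 3, "fail over to replica"),
    ("db_timeout", 4, "restart connection pool"),
    ("cache_miss_storm", 1, "warm cache"),
]

@dataclass
class Decision:
    severity: str
    runbook_action: str
    blast_radius: float  # expected number of affected services

def decide(error_type, history=HISTORY):
    """Turn an incident type into a decision using past incidents of the same type."""
    past = [(n, action) for etype, n, action in history if etype == error_type]
    if not past:
        # No precedent: make the uncertainty explicit instead of guessing.
        return Decision("unknown", "escalate to on-call", 0.0)
    radius = sum(n for n, _ in past) / len(past)
    actions = [action for _, action in past]
    best_action = max(set(actions), key=actions.count)  # most common past fix
    severity = "high" if radius >= 3 else "low"  # threshold is an assumption
    return Decision(severity, best_action, radius)

decision = decide("db_timeout")
print(decision)
```

A lookup this simple is easy to validate against real incidents, which fits the "impact per unit complexity" lens better than an unvalidated multi-agent design.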

📈 What You Should Do Before Submitting

Validate ruthlessly. If you cannot give a clear answer about your problem, AI logic, system design, or business value, your solution needs refinement.

Pro tip: Record yourself presenting the solution — if the core value isn’t clear within 90 seconds, restructure your narrative.

🎙️ Inside the Judge’s Mind: What Wins Trophies

- ⚡ Real-time adaptation: AI that improves with new data or user feedback (even simulated).

- 📐 Explainability layer: SHAP values, natural language justifications → builds trust.

- 💰 Cost efficiency: Optimized inference, small models, caching strategies → shows maturity.

- 🧩 Developer empathy: Integrates into existing workflows (CLI, IDE, Slack, API).
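Of the cost-efficiency strategies above, caching is the cheapest to demonstrate. Here is a minimal sketch using Python's standard `functools.lru_cache`. `expensive_model` is a hypothetical stand-in for a costly inference call, and the counter exists only to show how many calls the cache avoids.

```python
from functools import lru_cache

calls = {"model": 0}

def expensive_model(pattern):
    """Stand-in for a costly inference call (hypothetical)."""
    calls["model"] += 1
    return f"probable cause for: {pattern}"

@lru_cache(maxsize=1024)
def cached_diagnosis(pattern):
    """Identical patterns are served from the cache instead of the model."""
    return expensive_model(pattern)

for p in ["db timeout", "db timeout", "upstream 502", "db timeout"]:
    cached_diagnosis(p)

# Four requests, but the model only ran for the two distinct patterns.
print(calls["model"], cached_diagnosis.cache_info())
```

Caching pays off most when inputs are normalized first, so that repeated incidents map to the same key.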

🚀 Ready to Participate in GrabHack 2.0?

If you’re serious about building something impactful with AI, this hackathon is worth your time, and that mindset already puts you ahead of most participants.

📝 Register Here → Start building your intelligent system today

🎯 Final Thoughts

GrabHack 2.0 is not just another competition — it’s an opportunity to think beyond coding and move towards building intelligent systems with real-world impact. Winning is not just about execution. It’s about clarity, relevance, and usefulness. And those who focus on these will naturally stand out.

Focus on depth over breadth. A polished incident intelligence agent, a smart resource allocator, or a fraud detection microservice with clear decision logic will always surpass a bloated “AI for everything” concept.

🔍 This strategic blueprint is your competitive edge. Align your team around measurable outcomes, explainable AI, and a razor-sharp use case. Build systems that matter.